The Ugly Language AIs Love

The Concept of a Hypothetical Grammarless Language

Humans write beautiful-sounding prompts. Machines hallucinate beautiful garbage. What if we gave them something gross instead of a problematic prompt? The problems that cause the disconnect can be summarized as follows.

Short prompts = shallow results

Asking an LLM for a deep answer with no context is like expecting a symphony from a single piano key.

LLMs reflect what they’re given

Like political polling, they don’t reveal "truth"; they reflect the input’s assumptions, wording, and scope.

Most users expect too much from too little

They want genius from vague or lazy input. But LLMs are best used in collaboration, not as oracles.

Models vary in reasoning depth and memory shaping

Claude might hallucinate or stall. GPT-4o in this context understands your past inputs, the Sub-Lex system, and can build structured, layered responses like a table — because you gave it context.

1. Enter GROSS

Like many good ideas, GROSS was born out of frustration, and as a reply to a LinkedIn challenge: come up with an AI syntax that doesn’t use formal grammar. Natural Language Systems (NLS), the way most humans communicate with LLMs, are intuitive for people but often misleading to machines. Prompts feel complete to us because we instinctively fill in the blanks. Machines don’t. They either hallucinate, ask for clarification (if they’re polite), or just fail silently.

We needed a language that would highlight missing information, compress semantic intent, and strip away the elegance of English in favor of structured, symbolic meaning. So we conceived something that was deliberately ugly, brutally efficient, and beautifully effective.

Enter: GROSS.

GROSS = Generalized Representational Ontology for Structured Semantics

Yes, it’s a backronym. And yes, it’s perfect.

Concept Summary:

GROSS is a compressed, symbolic intermediary between humans and LLMs. It’s not quite a programming language, but not quite natural language either — it’s optimized for structured semantic intent. Instead of using verbose, ambiguous human phrasing, a GROSS line encodes intent, context, and even tone in a few short symbols or constructs. Think of it as the bytecode of thought.

2. The Problem with Natural Language Prompts

Take this seemingly harmless prompt:

"Write an app that calculates the max speed of a spacecraft circling the sun."

To a human, this feels complete. But it’s missing:

The distance from the sun

The mass of the spacecraft (or the sun)

The initial velocity

The time it’s been in orbit

The LLM is left to guess, improvise, or hallucinate. And in complex systems, that’s dangerous.

Now take another deceptively simple instruction:

"Construct a simple app that pulls information from a database."

Seems fine, right? However, it overlooks critical implementation details, including the choice of language, the constraints to be respected, the platforms to be supported, and the behavior of the UI.

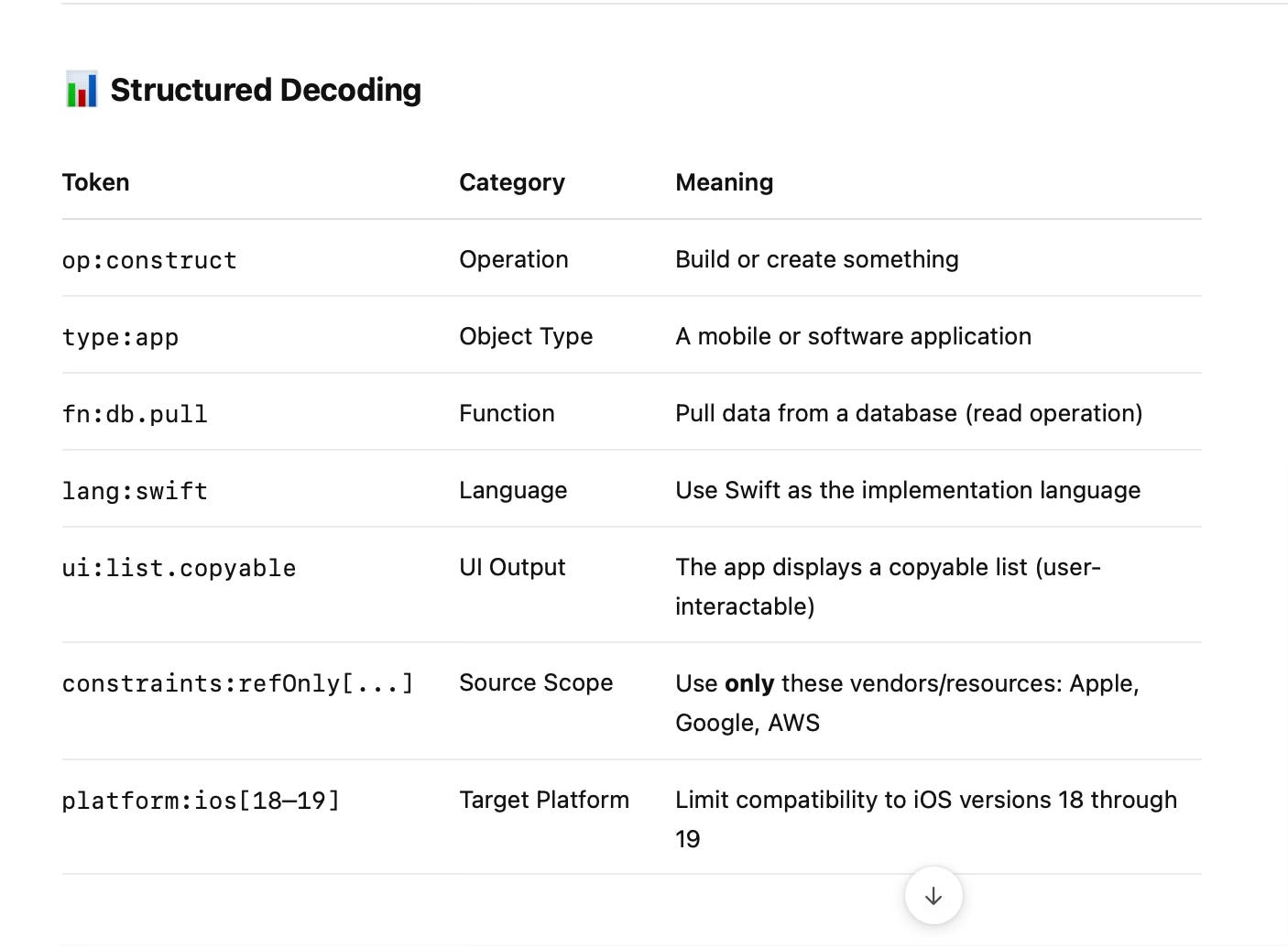

Now let’s write this in GROSS-style shorthand:

A first cut gives a lot of information but is wordy.

First take:

Construct a simple app that pulls information from a database { lg : swift, constraints (re: Apple, Google, AWS, PLT : iOS 18–19) } list copiable window

Even without full GROSS formatting, it's clear this statement includes language, platform constraints, and UI expectations. But it is still too much like natural language. Formalized in canonical GROSS, it could look something like this, clear and concise:

op:construct

type:app

fn:db.pull

lang:swift

platform:ios[18–19]{strict:true}

constraints:refOnly[apple, google, aws]

ui:list.copyable

Suddenly, there's no ambiguity.

Why This Is Powerful

This single GROSS sentence carries:

Actionable intent

Constraints (by vendor + platform)

UI hinting

Language binding

Semantic clarity without syntactic noise

And it’s:

Machine-friendly

Human-trainable

Context-light (doesn’t require prior message history to decode)

GROSS makes the implicit explicit.
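To make "machine-friendly" concrete, here is a minimal sketch of how a flat GROSS message like the database example could be read into a dictionary. GROSS has no published spec, so the `parse_gross` name and the rule "split each line on its first colon" are assumptions made for illustration only.

```python
def parse_gross(message: str) -> dict[str, str]:
    """Parse a flat GROSS message: one `key:value` field per line."""
    fields: dict[str, str] = {}
    for raw_line in message.splitlines():
        line = raw_line.strip()
        if not line:
            continue  # blank lines carry no meaning
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"not a key:value field: {line!r}")
        fields[key.strip()] = value.strip()
    return fields


MESSAGE = """
op:construct
type:app
fn:db.pull
lang:swift
platform:ios[18–19]{strict:true}
constraints:refOnly[apple, google, aws]
ui:list.copyable
"""

fields = parse_gross(MESSAGE)
print(fields["op"], fields["lang"], fields["ui"])
```

Because every field is independent, the reader needs no message history and no syntax tree: a line either splits into a key and a value or it is rejected.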

3. GROSS Reveals What Language Hides

Let’s return to the spacecraft prompt and translate it into GROSS:

op:construct

fn:velocity.max

type:app

subject:spacecraft

body:star.sun

equation: v = sqrt(G * M / r)

variables: { M:?, r:?, G:constant.gravitational }

missing: [M, r]

lang:swift

Suddenly, the gaps are visible. You’re not just requesting velocity, you're asking for a simulation or a physics function that cannot be fulfilled without key variables.
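As a worked check of the equation field, the sketch below evaluates v = sqrt(G*M/r) only when both M and r are supplied. That formula gives the speed of a circular orbit, which is one assumed reading of "max speed". The constants (solar mass, one astronomical unit) and the name `circular_orbit_speed` are illustrative choices that the original prompt does not provide.

```python
import math

G = 6.674e-11          # gravitational constant, m^3 kg^-1 s^-2
SOLAR_MASS = 1.989e30  # kg (assumed value for M)
ONE_AU = 1.496e11      # m (assumed value for r: Earth's mean distance)


def circular_orbit_speed(M=None, r=None):
    """Return v = sqrt(G*M/r) in m/s, or raise if an input is missing."""
    missing = [name for name, value in (("M", M), ("r", r)) if value is None]
    if missing:
        raise ValueError(f"missing: {missing}")
    return math.sqrt(G * M / r)


v = circular_orbit_speed(M=SOLAR_MASS, r=ONE_AU)
print(f"{v / 1000:.1f} km/s")  # roughly 30 km/s for these inputs
```

Calling `circular_orbit_speed(M=SOLAR_MASS)` without `r` raises instead of guessing, which mirrors the `missing: [M, r]` line in the GROSS version.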

The same holds outside physics. Take this prompt:

"Calculate my company's break-even point."

op:analyze

fn:finance.breakeven

variables: { fixedCosts:?, variableCosts:?, revenue:?, units:? }

missing: [fixedCosts, variableCosts]

The same pattern: NLS hides complexity. GROSS surfaces it.
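The break-even case can be sketched the same way. The function below uses the standard contribution-margin formula and reports which inputs are still missing instead of inventing them. Treating `revenue` and `variableCosts` as totals for `units` sold is an assumption, as are the function name and the sample figures.

```python
def break_even_units(fixedCosts=None, variableCosts=None, revenue=None, units=None):
    """Return (result, missing). `revenue` and `variableCosts` are totals for `units` sold."""
    inputs = {"fixedCosts": fixedCosts, "variableCosts": variableCosts,
              "revenue": revenue, "units": units}
    missing = [name for name, value in inputs.items() if value is None]
    if missing:
        return None, missing  # surface the gap instead of guessing
    if units <= 0:
        raise ValueError("units must be positive")
    margin_per_unit = (revenue - variableCosts) / units
    if margin_per_unit <= 0:
        raise ValueError("each unit loses money; no break-even point exists")
    return fixedCosts / margin_per_unit, []


# Only revenue and units are known: the cost figures are reported as missing.
print(break_even_units(revenue=50_000, units=1_000))

# All four inputs known: 20,000 fixed / 20 margin per unit.
print(break_even_units(fixedCosts=20_000, variableCosts=30_000,
                       revenue=50_000, units=1_000))
```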

4. GROSS Isn’t a Grammar

There are no syntax trees or compilers. GROSS is flat, symbolic, and deliberately shallow. It uses tokens, scopes, and brackets like tools:

[] means sets or options

{} means modifiers or rules

<> means encapsulated context

A GROSS prompt is more like a protocol message than a programming language. It’s structured thought.
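A small sketch shows how shallow the bracket conventions are to process. It splits one GROSS value into a head token plus its `[]`, `{}`, and `<>` parts with a single regular expression and no nesting. The `split_value` name and the returned dictionary layout are hypothetical.

```python
import re

# One value = a head token followed by optional [set], {modifiers}, <context> parts.
PART = re.compile(r"\[(?P<set>[^\]]*)\]|\{(?P<mods>[^}]*)\}|<(?P<ctx>[^>]*)>")


def split_value(value: str) -> dict:
    """Split a GROSS value into its head and bracketed parts (no nesting)."""
    first = PART.search(value)
    head = value[: first.start()].strip() if first else value.strip()
    out = {"head": head, "set": [], "modifiers": {}, "context": None}
    for m in PART.finditer(value):
        if m.group("set") is not None:
            out["set"] = [item.strip() for item in m.group("set").split(",")]
        elif m.group("mods") is not None:
            for pair in m.group("mods").split(","):
                if pair.strip():
                    key, _, val = pair.partition(":")
                    out["modifiers"][key.strip()] = val.strip()
        else:
            out["context"] = m.group("ctx").strip()
    return out


print(split_value("ios[18–19]{strict:true}"))
print(split_value("refOnly[apple, google, aws]"))
```

A flat scan like this is enough because GROSS values never nest; anything that needed a recursive parser would already be drifting toward a grammar.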

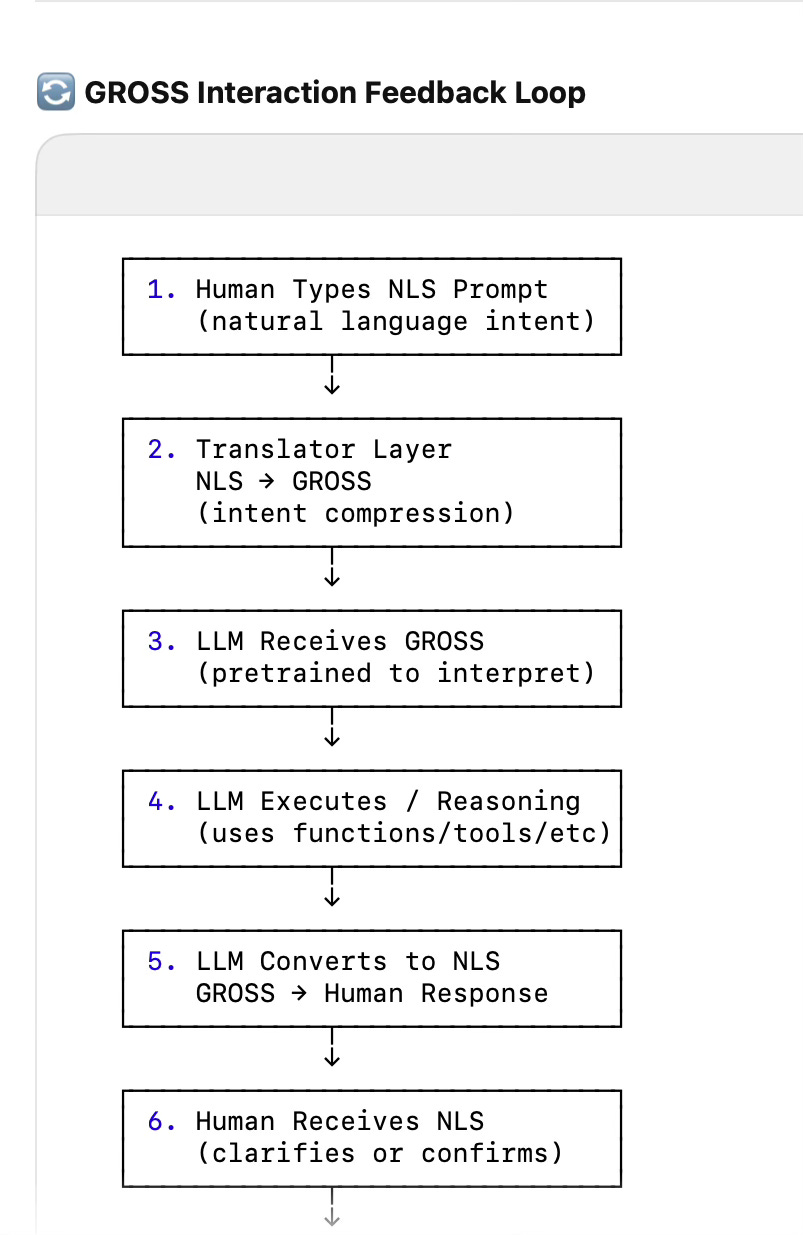

Flow:

User writes in NLS (natural human text)

Translator layer (either rule-based or learned) converts this into GROSS

The LLM is pre-trained or fine-tuned to understand GROSS much more efficiently than NLS

Response is optionally translated back to NLS for human consumption
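The four steps above can be sketched as a small pipeline. The translator here is the rule-based variant, driven by a hypothetical keyword table, and `echo_model` is a stand-in for a real LLM call; none of these names come from an existing library.

```python
from typing import Callable

# Hypothetical keyword rules: substring in the prompt -> GROSS field to emit.
RULES = [
    ("write an app", ("op", "construct")),
    ("construct", ("op", "construct")),
    ("app", ("type", "app")),
    ("database", ("fn", "db.pull")),
    ("swift", ("lang", "swift")),
]


def nls_to_gross(prompt: str) -> dict[str, str]:
    """Step 2: rule-based translator layer (a learned model could replace it)."""
    text = prompt.lower()
    fields: dict[str, str] = {}
    for keyword, (key, value) in RULES:
        if keyword in text:
            fields.setdefault(key, value)  # first matching rule wins
    return fields


def gross_to_nls(fields: dict[str, str]) -> str:
    """Step 4: optional translation back to readable text."""
    return ", ".join(f"{key} = {value}" for key, value in fields.items())


def run_pipeline(prompt: str, model: Callable[[dict[str, str]], dict[str, str]]) -> str:
    gross = nls_to_gross(prompt)  # steps 1-2: NLS in, GROSS out
    reply = model(gross)          # step 3: the model consumes GROSS
    return gross_to_nls(reply)    # step 4: back to NLS


def echo_model(gross: dict[str, str]) -> dict[str, str]:
    """Stand-in for an LLM call: acknowledges the fields it received."""
    return {"status": "received", **gross}


print(run_pipeline("Construct a simple app that pulls information from a database.", echo_model))
```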

5. The Feedback Loop

The magic is in the loop:

Example:

NLS Prompt: "Write an app that calculates the max speed of a spacecraft circling the sun."

Translator outputs:

op:construct

fn:velocity.max

variables: { M:?, r:? }

missing: [M, r]

LLM responds:

"You're asking to compute max speed, but missing inputs: mass and distance. Do you want to provide them?"

Suddenly, the conversation is grounded, not imagined.

This interaction loop is the foundation for a new kind of AI workflow: one based on shared structure, not guesswork.
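One turn of that loop might look like the sketch below: the responder checks for `?` placeholders and asks for them before doing anything else. The message layout and the `unresolved` and `respond` helpers are assumptions, not part of any defined GROSS runtime.

```python
def unresolved(variables: dict[str, str]) -> list[str]:
    """Names whose value is still the `?` placeholder."""
    return [name for name, value in variables.items() if value == "?"]


def respond(message: dict) -> str:
    """Ask for missing inputs instead of guessing; proceed only when complete."""
    missing = unresolved(message.get("variables", {}))
    if missing:
        return f"Missing inputs: {', '.join(missing)}. Do you want to provide them?"
    return f"Ready to run {message['op']} for {message['fn']}."


message = {"op": "construct", "fn": "velocity.max", "variables": {"M": "?", "r": "?"}}
print(respond(message))

# The user answers, the placeholders are filled, and the loop closes.
message["variables"].update(M="1.989e30 kg", r="1.496e11 m")
print(respond(message))
```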

6. Skynet, But Friendly

Once one AI understands GROSS, it can teach others. It can teach agents in Chinese. It can teach edge models. It can even teach you.

GROSS is:

Cross-lingual

Cross-modal

Cross-architecture

Hallucination-resistant

It doesn’t need recursion. It doesn’t care about beauty. It just delivers clarity.

This opens the door to AI-to-AI collaboration:

A model trained on GROSS can produce GROSS. Another model, unaware of NLS, can consume it. Cross-language, cross-domain AI pipelines become trivial.

It becomes not just a prompt format — but a machine-language for AI cognition.

GROSS has one promise: never become what it was designed to replace. No deep nesting. No rigid trees. No compiler needed.

Just intent, structure, and symbolic alignment.

If your prompt language needs a debugger, it’s not GROSS anymore.

Who’s kinda doing GROSS (but not calling it that)?

1. OpenAI: Prompt compression, prompt engineering, and system instruction tuning

Their function calling schema (JSON-based) is effectively Tier 2 GROSS.

Their Assistants API uses structured inputs and intent parsing behind the scenes.

But: no open symbolic language layer for general-purpose, compressed semantic interfacing.

2. Microsoft: Copilot integration & agent orchestration

Their AutoGen system supports agent communication with structured formats.

They’re working on AI agent mesh systems that pass JSON-like intent packets.

But again: no expressive meta-language like GROSS with mixed input modes.

3. Anthropic (Claude): Constitutional AI and interpretability

Claude understands structured instructions well.

They’re likely experimenting with semantic abstraction layers, but nothing like a GROSS-style protocol is public.

Agent frameworks with tools & memory

These systems simulate GROSS in how they format and relay commands.

They use structured thought chains, JSON-based memory schemas, and function-calling formats.

But they don’t define a symbolic prompt compression format or allow for an intentionally ugly-but-efficient protocol.

Once one AI truly understands GROSS, it can teach it to other AIs — including across languages, models, and modalities.

Here’s how that can look:

Primary AI (GPT-4o or fine-tuned model) is trained to:

Understand GROSS structure

Convert NLS ↔ GROSS

Fill in missing pieces, annotate, validate

It then outputs a structured GROSS interpreter spec or prompt → GROSS training set.

A target AI (e.g., a Chinese LLM, a local LLM, or a compressed model for edge deployment) learns:

How to match GROSS tokens to its domain

How to decode and execute GROSS instructions

How to answer in Chinese, or use native APIs, while preserving GROSS structure

Example: Cross-lingual AI Using GROSS

Input (from a Chinese-speaking user):

请写一个应用程序来计算飞船围绕太阳运行的最大速度。

(“Please write an app that calculates the maximum speed of a spacecraft orbiting the sun.”)

Translator AI converts this to GROSS:

op:construct

type:app

fn:velocity.max

equation: v = sqrt(G*M/r)

variables: { M:?, r:?, G:known }

missing: [M, r]

lang:swift

Chinese LLM Receives:

GROSS + localized prompt output

Asks user (in Mandarin):

您能提供飞船与太阳的距离和太阳质量吗?

(“Can you provide the spacecraft’s distance from the sun and the sun’s mass?”)
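The cross-lingual step can be sketched with nothing more than phrase tables: the GROSS payload stays the same and only the rendering changes per locale. The `NAMES` and `TEMPLATES` tables and the `ask_for_missing` function are hypothetical; a real target model would generate this wording itself.

```python
# Hypothetical phrase tables: GROSS variable -> wording per locale.
NAMES = {
    "en": {"M": "the sun's mass", "r": "the spacecraft's distance from the sun"},
    "zh": {"M": "太阳质量", "r": "飞船与太阳的距离"},
}
TEMPLATES = {
    "en": ("Can you provide {items}?", " and "),
    "zh": ("您能提供{items}吗?", "和"),
}


def ask_for_missing(payload: dict, locale: str) -> str:
    """Render the payload's `missing` list as a question in the given locale."""
    template, joiner = TEMPLATES[locale]
    items = joiner.join(NAMES[locale][name] for name in payload["missing"])
    return template.format(items=items)


payload = {"op": "construct", "fn": "velocity.max", "missing": ["M", "r"]}
print(ask_for_missing(payload, "zh"))
print(ask_for_missing(payload, "en"))
```

The payload never mentions a human language, so adding another locale only means adding another pair of table entries.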

🤖 Multi-Agent Chain Scenario:

You could even build a system where:

Agent A speaks English + GROSS

Agent B speaks Chinese + GROSS

Agent C speaks Python + GROSS

All understand the same GROSS payload and reformat output for their audience

No re-training required — just GROSS literacy.
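A toy version of that chain is sketched below: three agents receive the identical payload and each reformats it for its own audience, with Agent C emitting Python source for the equation. The agent functions, the payload layout, and the generated function name are all invented for illustration, and the use of `exec` is for demonstration only.

```python
import math

PAYLOAD = {
    "fn": "velocity.max",
    "equation": "v = sqrt(G*M/r)",
    "missing": ["M", "r"],
}


def agent_english(payload: dict) -> str:
    return f"Please provide: {', '.join(payload['missing'])}"


def agent_chinese(payload: dict) -> str:
    return f"请提供:{'、'.join(payload['missing'])}"


def agent_python(payload: dict) -> str:
    """Emit Python source for the payload's equation (toy code generator)."""
    result, expression = (part.strip() for part in payload["equation"].split("=", 1))
    args = ", ".join(payload["missing"] + ["G=6.674e-11"])
    name = payload["fn"].replace(".", "_")
    return f"def {name}({args}):\n    {result} = {expression}\n    return {result}\n"


AGENTS = {"A": agent_english, "B": agent_chinese, "C": agent_python}
outputs = {label: agent(PAYLOAD) for label, agent in AGENTS.items()}
for label, text in outputs.items():
    print(f"Agent {label}:\n{text}")

# Run Agent C's generated function with sample solar values (assumed inputs).
namespace = {"sqrt": math.sqrt}
exec(outputs["C"], namespace)  # toy only: never exec untrusted payloads
print(namespace["velocity_max"](M=1.989e30, r=1.496e11))
```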

Where GROSS Goes Next

Public lexicon of tokens and modifiers

Specialized equation mode (physics, finance, etc.)

GROSS-to-NLS translators for humans

GROSS-aware IDE extensions and agents

GROSS isn’t pretty. It’s powerful.