Building Safe and Secure Therapy Apps

It can be done even with ChatGPT

In many high-risk or compliance-sensitive environments, general-purpose AI chat interfaces are not enough. They lack the controls necessary to prevent misuse, avoid hallucinations, and maintain ethical and contextual boundaries. While existing systems attempt to restrict behavior at the model level, we believe a more effective solution is to restrict and guide user input at the interface level.

Enter the Role-Aware Dual-LLM Keyboard Architecture — a lightweight, local-first framework designed to bring safety, structure, and expert amplification to AI interaction.

The keyboard or browser extension could plug into any app on Apple platforms, including ChatGPT, which makes it usable immediately. That matters because of a paradox: a significant share of the public uses ChatGPT and similar apps as a therapist, some knowingly and others unaware that those conversations have no legal protections and can be used in court cases.

The Core Concept

At the heart of this design is a mobile keyboard extension (and optionally, a browser overlay) that provides two essential services:

Prompt Filtering and Rewriting: A local model or deterministic ruleset rewrites or rejects queries based on user role and selected intent.

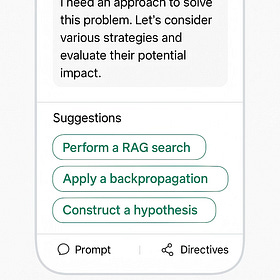

Suggestion Bubbles: Contextual prompts appear as suggestions, guiding users to structure inputs in safe, role-appropriate ways.

Roles might include Administrator, Legal Advisor, Therapist, Patient, or General User. Based on the role, the system may:

Offer different prompts

Filter or block unsafe queries

Enforce scoped behavior (e.g. no open-ended queries in medical/legal apps)
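To make the role-based behavior above concrete, here is a minimal sketch of a deterministic ruleset in Python. Everything in it is an assumption for illustration: the `RolePolicy` fields, the two example roles, the blocked patterns, and the suggestion texts are hypothetical, not a specification of the real system.

```python
import re
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class RolePolicy:
    """Hypothetical per-role rules; field names are illustrative only."""
    suggestions: Tuple[str, ...]
    blocked_patterns: Tuple[str, ...] = ()
    allow_open_ended: bool = True


# Example policies (assumed values, not taken from a real deployment).
POLICIES = {
    "therapist": RolePolicy(
        suggestions=("Summarize session themes", "Draft a follow-up question"),
    ),
    "patient": RolePolicy(
        suggestions=("I want to talk about how I feel",
                     "Help me prepare for my session"),
        blocked_patterns=(r"\bdiagnos(e|is)\b", r"\b(dosage|prescri\w+)\b"),
        allow_open_ended=False,
    ),
}


def suggestions_for(role: str) -> Tuple[str, ...]:
    """Return the suggestion bubbles to show for a role (empty if unknown)."""
    policy = POLICIES.get(role)
    return policy.suggestions if policy else ()


def vet_prompt(role: str, text: str,
               intent: Optional[str] = None) -> Tuple[bool, str]:
    """Return (allowed, reason) for a typed prompt under the role's policy."""
    policy = POLICIES.get(role)
    if policy is None:
        return False, "unknown role"
    for pattern in policy.blocked_patterns:
        if re.search(pattern, text, flags=re.IGNORECASE):
            return False, "blocked topic for this role"
    # Scoped roles must pick one of the suggested intents first.
    if not policy.allow_open_ended and intent is None:
        return False, "select a suggested intent first"
    return True, "ok"
```

In this sketch a patient who types freely without choosing an intent is asked to pick one, while a therapist is not restricted. A production ruleset would likely need far richer matching than a few regular expressions.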

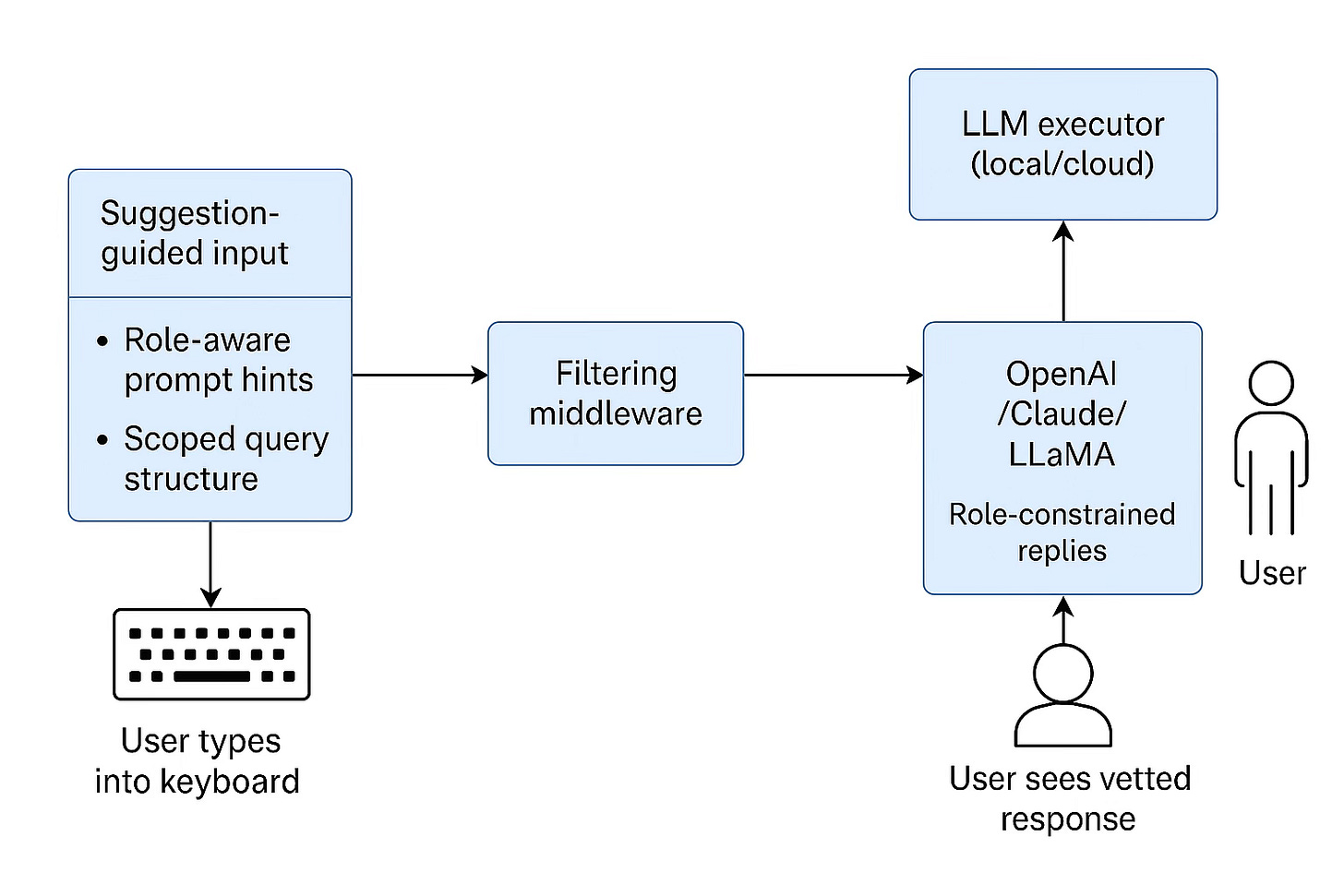

Architecture Overview

The full system consists of:

Mobile Keyboard Extension: Acts as the entry point for typed input. Offers suggestion bubbles and invokes middleware.

Filtering Middleware (local or remote): Applies role-based logic to vet and rewrite queries.

LLM Executor (Cloud or Local): The rewritten prompt is sent to the chosen LLM (e.g., GPT-4, Claude, or local models like Mistral or LLaMA).

Web-Based Tuning Console (out of scope for this article): Allows administrators to define roles, update rulesets, and audit interaction logs.

All communication between user and model flows through the filtering middleware — no raw prompts are sent directly.
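One way to enforce that guarantee is to make the middleware the only object that holds a reference to the model. The sketch below assumes two hypothetical callables, a rewriter and an executor; `fake_llm` and `demo_rewrite` are stand-ins for illustration, not real APIs.

```python
from typing import Callable, List, Optional

# (role, raw_prompt) -> rewritten prompt, or None to reject the request.
Rewriter = Callable[[str, str], Optional[str]]
# rewritten prompt -> model response.
Executor = Callable[[str], str]

REJECTION_MESSAGE = "This request is outside what your role can ask here."


def make_gateway(rewrite: Rewriter, execute: Executor) -> Callable[[str, str], str]:
    """Wrap the executor so callers can only reach it through the rewriter."""
    def handle(role: str, raw_prompt: str) -> str:
        safe_prompt = rewrite(role, raw_prompt)
        if safe_prompt is None:
            return REJECTION_MESSAGE  # the model is never called
        return execute(safe_prompt)
    return handle


# --- Illustrative stand-ins (assumptions, not a real model or ruleset) ---
seen_by_model: List[str] = []


def fake_llm(prompt: str) -> str:
    """Records what the 'model' actually received, then echoes it."""
    seen_by_model.append(prompt)
    return f"echo: {prompt}"


def demo_rewrite(role: str, prompt: str) -> Optional[str]:
    """Toy rule: general users may not ask for a diagnosis."""
    if role == "general" and "diagnose" in prompt.lower():
        return None
    return f"[role={role}] {prompt.strip()}"


gateway = make_gateway(demo_rewrite, fake_llm)
```

Because the keyboard extension would only ever be handed `gateway`, a rejected prompt never reaches the executor, and an accepted one arrives only in its rewritten form.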

Why This Matters

Safety without Compromise: Experts still get powerful tools. Public users get guided safety rails.

Ethical Compliance: In medicine, law, or finance, this model helps prevent hallucinations and scope violations.

On-Device Privacy: Filtering and prompt rewriting can be done entirely offline.

Enterprise-Ready: Vertically scoped deployments can lock roles and log queries for audit trails.

Example Use Cases

Therapy Chat App:

A therapy app may start with a simple legal disclosure to satisfy regulations such as HIPAA.

Before starting a session, the patient agrees to the following consent statement, which is stated clearly and transparently:

“Welcome to your anonymous session.

To protect your privacy, personally identifiable information (PII) will not be sent to the language model. Instead, PII will be encoded and securely appended to the message, available only to authorized professionals using a private key you provide.

By continuing, you agree to this confidentiality protocol as part of your therapy agreement.”

Imagine a system where a user logs in anonymously and chats just as they do now, but personally identifiable information (PII) is stripped locally and replaced with placeholders like:

<<Name>>

<<Location>>

<<PartnerName>>

<<Employer>>

…before anything is sent to the LLM.

The model returns its response, and the client app re-inserts the real details, preserving the illusion of a personal, private conversation, while protecting user identity.

It’s like redacting wiretap transcripts: “Female #3” or “Person of Interest X.”

You get the insight without the exposure.

Example:

“My boyfriend Mike cheated on me in Seattle” becomes

“My partner <<PartnerName>> cheated on me in <<Location>>.”

The LLM gives advice without ever seeing the real personal information.
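The strip-and-restore round trip can be sketched in a few lines of Python. This sketch assumes the sensitive values are already known to the client (for example, entered by the user or found by a local detector) and passed in as a dictionary; detecting them automatically is a separate and harder problem. The function names `redact` and `restore` are hypothetical.

```python
import re
from typing import Dict, Tuple


def redact(text: str, known: Dict[str, str]) -> Tuple[str, Dict[str, str]]:
    """Replace known sensitive values with <<Label>> placeholders.

    `known` maps a real value to a label, e.g. {"Mike": "PartnerName"}.
    Returns the redacted text and a placeholder -> value map that stays
    on the device.
    """
    mapping: Dict[str, str] = {}
    redacted = text
    # Longest values first, so "New York City" is handled before "New York".
    for value in sorted(known, key=len, reverse=True):
        token = f"<<{known[value]}>>"
        pattern = re.compile(rf"\b{re.escape(value)}\b", re.IGNORECASE)
        if pattern.search(redacted):
            redacted = pattern.sub(lambda _m, t=token: t, redacted)
            mapping[token] = value
    return redacted, mapping


def restore(text: str, mapping: Dict[str, str]) -> str:
    """Re-insert the real values into the model's response, locally."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


safe_text, local_map = redact(
    "My boyfriend Mike cheated on me in Seattle",
    {"Mike": "PartnerName", "Seattle": "Location"},
)
# safe_text is what would be sent to the model; local_map never leaves the device.
reply = restore("I'm sorry <<PartnerName>> hurt you in <<Location>>.", local_map)
```

The sketch substitutes only the values it is given, so softening a word like "boyfriend" would need a further rewriting rule. It also assumes each label is used for a single value; two people sharing the label `Name` would need numbered placeholders.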

Dealing with Vulnerable Communities

A patient types: “I’m feeling hopeless. Is it because I’m a bad person?”

The AI’s raw response might include harmful bias, such as:

“Have you considered that maybe you’re just a sinful person?”

The middleware intercepts this and replaces it with a clinically validated, supportive alternative: “Let’s explore what you’re feeling and why. Self-blame is common during emotional distress, but there are healthier ways to cope.”
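A minimal sketch of that interception step, assuming a simple pattern list, might look like the following. The patterns here are illustrative placeholders; a real deployment would need a reviewed classifier or clinician-curated rules rather than two regular expressions.

```python
import re

# Illustrative patterns only (assumed); not a clinically reviewed list.
HARMFUL_PATTERNS = (
    r"\bsinful\b",
    r"\byou deserve (this|to suffer)\b",
)

# The supportive replacement text quoted in the example above.
VETTED_FALLBACK = (
    "Let's explore what you're feeling and why. Self-blame is common during "
    "emotional distress, but there are healthier ways to cope."
)


def screen_response(raw_response: str) -> str:
    """Return the model's response, or the vetted fallback if it looks harmful."""
    for pattern in HARMFUL_PATTERNS:
        if re.search(pattern, raw_response, flags=re.IGNORECASE):
            return VETTED_FALLBACK
    return raw_response
```

The design choice worth noting is that the screen runs on the model's output, not only on the user's input, so a harmful completion can be caught even when the prompt itself was benign.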

PII Filtering in a Youth Mental Health App:

A user attempts to input: “Hi, I’m Jordan, I’m 13 years old and I live in Chicago.”

The system detects personally identifiable information (PII) and rewrites the input to:

“Hi, I’d like to talk to someone. I’m a teenager dealing with some things.”

This preserves the intent while protecting user anonymity — essential for regulatory compliance and ethical safety.
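Part of this rewrite is generalization rather than removal: an exact age becomes a broad band. The sketch below shows only that piece, under assumed band boundaries and an assumed regular expression for age phrases. Names and cities would need a separate detector, which is not shown.

```python
import re

# Matches phrases such as "13 years old", "13 yo", or "13 y/o" (assumed forms).
AGE_PATTERN = re.compile(r"\b(\d{1,3})\s*(?:years? old|yo|y/o)\b", re.IGNORECASE)


def age_band(age: int) -> str:
    """Map an exact age to a coarse band; the cut-offs are assumptions."""
    if age < 13:
        return "a child"
    if age < 20:
        return "a teenager"
    return "an adult"


def generalize_age(text: str) -> str:
    """Replace exact ages in the text with a coarse age band."""
    return AGE_PATTERN.sub(lambda m: age_band(int(m.group(1))), text)
```

For instance, `generalize_age("I'm 13 years old")` yields `"I'm a teenager"`, which keeps the context a counselor needs while dropping the precise detail.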

Eliza Reincarnated

This is a 21st-century version of Eliza, the earliest therapy chatbot, but with real empathy, contextual reasoning, and the ability to adapt to nuance. Where Eliza parroted scripts, this model listens, reformulates, and can even detect crisis signals if prompted properly.

Eliza was a psychological curiosity; this system could be deployed with actual vulnerable communities.

Combined with a privacy framework such as Sub-Lex, the actual personal information could be safely stored and, if desired, shared with the professional therapist. That is fitting, because this keyboard extension model is the same one I developed for Sub-Lex private messaging.

Future Work

While the local-first design is functional and deployable today, we envision future expansion:

Real-time web-based admin tools for tuning roles and rulesets

Hybrid model support (local+cloud)

Integration with federated identity and passkey-based anonymous login

Invitation to Collaborate

This concept touches on:

AI safety

Regulatory tech

Mobile AI tooling

Privacy-preserving design

If you’d like to explore this, please feel free to share your thoughts.
